TL;DR

- Pick the language you know; weigh flexibility, crawling ability, ease, scalability, and maintainability.

- Python: most popular and beginner-friendly; strong libs (BS4/Selenium/Scrapy) but can be slower.

- Ruby & JS/Node: Nokogiri/HTTParty and Puppeteer/Cheerio; Node suits streaming / distributed but can be less stable.

- PHP & C++: PHP works with cURL but weak at scale; C++ is fast / parallel yet costly; tools like Scrapingdog are an alternative.

In 2026, the best programming language for web scraping will be the one that is best suited to the task at hand. Many languages can be used for web scraping, but the best one for a particular project will depend on the project’s goals and the programmer’s skills.

Python is a good choice for web scraping because it is a versatile language used for many tasks. It is also relatively easy to learn, so it is a good choice for those who are new to web scraping.

C++ offers excellent execution speed and lets you build a custom web scraping setup.

PHP is another popular language for web scraping. It is generally considered less powerful than Java for this kind of work, but it is easier to learn and use. It is also a convenient choice for teams whose existing sites already run on PHP.

Other alternative languages can be used for web scraping, but these are the most popular choices. Let’s dive in and explore the best language to scrape websites with a thorough comparison of their strengths and limitations.

Which Programming Language To Choose & Why?

A developer should pick the programming language that best helps them extract the data they need. Modern programming languages are robust enough to support many use cases, web scraping included.

When a developer wants to build a web scraper, the best programming language to use is the one they are most comfortable with. Web data often comes in highly complex formats, and since the structure of web pages keeps changing frequently, software developers need to adjust their code accordingly.

Before choosing a language, developers often check the tech stack of the target website to see whether it relies on client-side rendering, APIs, or server-side frameworks. This helps guide their choice of language and tools.

When selecting the programming language, the first and main criterion should be proper familiarity with it. Web scraping is supported in almost any programming language, so the one a developer is most familiar with should be chosen.

For instance, if you know PHP, start with PHP and take it from there. You will already have resources for that language, as well as prior experience and knowledge of how it works, which also helps you build scrapers faster.

The second consideration should be the availability of online resources for the language, so that you can fix bugs or find ready-made solutions to common problems.

Apart from these, there are a few other parameters that you should consider when selecting any programming language for web scraping. Let’s have a look at those parameters.

Parameters to Select the Best Programming Language

Flexibility

The more flexible a programming language is, the better it will be for a developer to use it for web scraping. Before choosing a language, make sure that it’s flexible enough for your desired endeavors.

Operational ability to feed database

Scraped data usually ends up in a database, so check how easily the language can connect to one and write records to it.
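As a rough illustration of what "feeding a database" can look like, the sketch below stores scraped rows with Python's built-in `sqlite3` module. The `pages` table, the `save_pages` helper, and the example.com URLs are assumptions made for this example, not part of any particular scraper.

```python
import sqlite3


def save_pages(conn, rows):
    """Store (url, title) pairs; a repeated URL overwrites the older row."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS pages (url TEXT PRIMARY KEY, title TEXT)"
    )
    conn.executemany(
        "INSERT OR REPLACE INTO pages (url, title) VALUES (?, ?)", rows
    )
    conn.commit()


# An in-memory database keeps the sketch self-contained.
conn = sqlite3.connect(":memory:")
save_pages(conn, [
    ("https://example.com/a", "Page A"),
    ("https://example.com/b", "Page B"),
])
# Re-scraping the same URL replaces the stored title instead of duplicating it.
save_pages(conn, [("https://example.com/a", "Page A (updated)")])

stored = conn.execute("SELECT url, title FROM pages ORDER BY url").fetchall()
print(stored)
```

Keying the table on the URL is one simple way to keep repeated crawls from piling up duplicate rows.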

Crawling effectiveness

The language you choose must have the ability to crawl through web pages effectively.
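Crawling comes down to finding the links on a page and deciding which ones to follow. The sketch below does this with Python's standard library only; the `LinkCollector` class, the `same_site_links` helper, and the sample HTML are hypothetical names and data chosen for illustration.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse


class LinkCollector(HTMLParser):
    """Collect the href of every <a> tag, resolved against the page URL."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        href = dict(attrs).get("href")
        if href:
            self.links.append(urljoin(self.base_url, href))


def same_site_links(html, base_url):
    """Return unique links that stay on the same host, in page order."""
    collector = LinkCollector(base_url)
    collector.feed(html)
    host = urlparse(base_url).netloc
    unique = []
    for link in collector.links:
        if urlparse(link).netloc == host and link not in unique:
            unique.append(link)
    return unique


sample = (
    '<a href="/pricing">Pricing</a> '
    '<a href="https://other.example/x">Elsewhere</a> '
    '<a href="/pricing">Pricing again</a> '
    '<a href="docs/">Docs</a>'
)
links = same_site_links(sample, "https://example.com/blog/")
print(links)
```

A real crawler would feed each returned link back into a queue of pages to visit; this sketch covers only the link-discovery step.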

Ease of coding

It’s really important that you can code easily using the language you choose.

Scalability

Scalability depends on the technology stack as a whole more than on the language itself. Some popular, battle-tested stacks that have proven capable of scaling are Ruby on Rails (RoR), MEAN, .NET, Java Spring, and LAMP.

Maintainability

Maintenance cost depends on how maintainable your technology stack and chosen programming language are. Based on your goals and budget, choose a language you can afford to maintain.

Top 6 Programming Languages for Effective & Seamless Web Scraping

Python

When it comes to web scraping, Python is still the most popular choice. It is a complete package that handles almost every step of data extraction smoothly. It is easy for beginner coders to understand and easy to use for web scraping, so you can get up to speed quickly even if you are new to the field.

Core Features

- Easy to understand

- Second only to JavaScript in the size of its online community and resources

- Comes with highly useful libraries

- Pythonic idioms work well for searching, navigating, and modifying parsed documents

- Advanced web scraping libraries that come in really handy while scraping web pages

Read More: Tutorial on Web Scraping using Python

Libraries/Advantages

Selenium – A browser automation tool with Python bindings; it helps with data extraction from pages that need a real browser to render.

BeautifulSoup – A Python library for parsing HTML and XML, designed for quick and efficient data extraction.

Scrapy – A popular web crawling and scraping framework built on the Twisted networking library, with a useful set of debugging tools. Its maturity is a large part of why Python is so popular for web scraping.

Limitations

- Too many options for data visualization can be confusing

- Can be slow because it is dynamically typed and interpreted line by line

- Weaker database access protocols

Ruby

Ruby is an open-source programming language. Its user-friendly syntax is easy to understand, so you can practice and apply it without much hassle. Its design draws on several languages, including Smalltalk, Perl, Ada, and Eiffel, and it deliberately balances functional programming with imperative programming.

Core Features

- HTTParty, Pry, and Nokogiri let you set up a web scraper without hassle.

- Nokogiri is a Ruby gem that offers XML, HTML, SAX, and Reader parsers with CSS and XPath selector support.

- HTTParty sends HTTP requests to the pages a developer wants to extract data from and returns each page's HTML as a string.

- Pry provides debugging

- No code repetition

- Simple syntax

- Convention over configuration

Ruby (programming language): What is a gem?

A Ruby gem is a library built by the Ruby community. It is a package of code structured to follow Ruby's software development conventions. Gems contain classes and modules that you can use in your own applications once you have installed them through RubyGems.

RubyGems is a manager of packages for the Ruby language, and it provides a standard format for distributing programs and libraries.

Ruby Scraping (How To Do It And Why It’s Useful)

Ruby is popular for creating web scraping tools and for building internationalized SaaS solutions. It is widely used for web scraping because it is an effective way to extract information for businesses, and it is secure, cost-effective, flexible, and highly productive. The steps of Ruby scraping are:

- Creating the Scraping file

- Sending the HTTP queries

- Launching Nokogiri

- Parsing

- Export
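The same fetch, parse, and export flow can be sketched in Python, the language used for this article's other examples (in Ruby, HTTParty and Nokogiri would fill the fetch and parse roles). The `fetch`, `HeadingParser`, and `export_csv` names and the inline HTML are assumptions for illustration; the inline HTML stands in for a fetched page so the sketch runs offline.

```python
import csv
import io
from html.parser import HTMLParser
from urllib.request import urlopen


def fetch(url):
    """Send the HTTP request and return the page body as text."""
    with urlopen(url, timeout=10) as response:
        return response.read().decode("utf-8", errors="replace")


class HeadingParser(HTMLParser):
    """Parse the HTML and collect the text of every <h2> element."""

    def __init__(self):
        super().__init__()
        self.in_h2 = False
        self.headings = []

    def handle_starttag(self, tag, attrs):
        if tag == "h2":
            self.in_h2 = True
            self.headings.append("")

    def handle_endtag(self, tag):
        if tag == "h2":
            self.in_h2 = False

    def handle_data(self, data):
        if self.in_h2:
            self.headings[-1] += data


def export_csv(headings):
    """Write one heading per row and return the CSV text."""
    buffer = io.StringIO()
    writer = csv.writer(buffer)
    writer.writerow(["heading"])
    for heading in headings:
        writer.writerow([heading.strip()])
    return buffer.getvalue()


# Inline HTML stands in for fetch("https://...") so no network is needed.
html = "<h1>Shop</h1><h2>Laptops</h2><p>...</p><h2> Phones </h2>"
parser = HeadingParser()
parser.feed(html)
csv_text = export_csv(parser.headings)
print(csv_text)
```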

Read More: Web Scraping with Ruby | Tips & Techniques for Seamless Scraping

Limitations

- Relatively slower than other languages

- Supported by a user community only, not a company

- Difficult to locate good documentation, especially for less-known libraries and gems

- Inefficient multithreading support

JavaScript

JavaScript was built mainly for front-end web development. For scraping, it runs on Node.js, a runtime that executes JavaScript outside the browser. Libraries such as Nightmare and Puppeteer are commonly used with Node.js for web scraping.

Read More: Puppeteer Web Scraping Using Javascript

Node.js

Node.js is a popular runtime for crawling web pages that rely on dynamic, script-driven content. It also supports distributed crawling.

Node.js uses JavaScript to run non-blocking applications, which lets it handle many simultaneous events efficiently.
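The non-blocking model is easiest to see in a small example. The sketch below shows the same idea using Python's `asyncio` rather than Node.js; `fake_fetch` is a hypothetical stand-in that sleeps instead of making a real request, so the timing shows the effect without any network access.

```python
import asyncio
import time


async def fake_fetch(url, delay=0.2):
    """Stand-in for a network call; awaiting the sleep yields to the event loop."""
    await asyncio.sleep(delay)
    return f"fetched {url}"


async def crawl(urls):
    # gather() runs all coroutines concurrently and keeps results in input order.
    return await asyncio.gather(*(fake_fetch(u) for u in urls))


urls = [f"https://example.com/page/{i}" for i in range(5)]
start = time.perf_counter()
results = asyncio.run(crawl(urls))
elapsed = time.perf_counter() - start

# Five 0.2 s waits overlap, so the total is far below the 1.0 s a
# one-at-a-time loop would need.
print(len(results), f"{elapsed:.2f}s")
```

While one request is waiting, the event loop moves on to the next one, which is why a single thread can keep many requests in flight.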

Framework

ExpressJS is a flexible, minimal web application framework for Node.js with features for web and mobile applications. Node.js also makes quick HTTP calls easy, and Cheerio, an implementation of core jQuery for the server, lets you traverse the DOM and extract data.

Read More: Step-by-Step Guide for Web Scraping with Node JS

Features

- Handles API and socket-based work

- Performs basic data extraction and web scraping

- Good for streaming

- Ships with a built-in standard library

- Offers stable, basic communication

- Good for scraping large-scale data

Limitations

- Best suited for basic web scraping work

- Requires multiple code changes because of unstable API

- Not good for long-running processes

- Stability is not that good

- Lacks maturity

PHP

PHP might not be an ideal choice for building a crawler. For web scraping with PHP you can use the cURL library, which also works for downloading images, graphics, videos, and other media. If you’re looking for help building tailored scraping solutions, exploring Custom PHP Development can be a smart move.

Read More: Web Scraping with PHP

Core Features

- Helps transfer files with the help of protocol lists consisting of HTTP and FTP

- Helps create web spiders that can be utilized to download any information online

- Open-source

- Free of cost

- Simple to use

- Light on resources: uses 3% CPU and 39 MB of RAM

- Can process 723 pages per 10 minutes

Limitations

- Not suitable for large-scale data extraction

- Weak multithreading support

C++

C++ offers outstanding execution speed for web scraping and allows a highly customized setup, but building a scraping solution in it can be costly. Make sure your budget allows for it. It is a poor fit unless data extraction is the main focus of your project.

Core Features

- Quite a simple user interface

- Allows for efficiently parallelizing the scraper

- Works great for extracting data

- Scrapes well when paired with a dynamic language

- Can be used to write an HTML parsing library and fetch URLs

Limitations

- Not great for just any web-related project, as it works better with a dynamic language

- Expensive to use

- Not best suited for creating crawlers

Which is better for web scraping: Python or JavaScript?

I would say Python is the better language for web scraping due to its ease of use. It comes with a large number of libraries and frameworks, and strong support for data analysis and visualization. Python’s BeautifulSoup and requests libraries are widely used for web scraping, and they provide a simple and powerful way to extract data from HTML documents.

But there is a catch. The requests library is synchronous, so a simple script downloads one page at a time, and Python's Global Interpreter Lock (GIL) keeps threads from running CPU-bound work such as parsing in parallel. At very high volumes this can become a bottleneck unless you add threads, asyncio, or multiple processes, which is the main disadvantage of using Python in a production scraper.
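One common way to soften this limitation for network-bound work is a thread pool, because threads that are waiting on I/O do not hold each other up. The sketch below is a minimal illustration: `fake_download` is a hypothetical stand-in for a real `requests.get(url)` call, with a sleep in place of the network wait.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def fake_download(url):
    """Hypothetical stand-in for requests.get(url); the sleep mimics network wait."""
    time.sleep(0.2)
    return url, 200


urls = [f"https://example.com/item/{i}" for i in range(8)]

start = time.perf_counter()
# Four worker threads wait on "the network" at the same time.
with ThreadPoolExecutor(max_workers=4) as pool:
    statuses = dict(pool.map(fake_download, urls))
elapsed = time.perf_counter() - start

# Eight 0.2 s downloads on four threads take about 0.4 s instead of 1.6 s.
print(len(statuses), f"{elapsed:.2f}s")
```

This helps only while the work is dominated by waiting; heavy parsing still runs one thread at a time under the GIL.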

Example of extracting the title tag using requests and BS4:

```python
import requests
from bs4 import BeautifulSoup

url = 'https://scrapingdog.com/'

# Send a GET request to the URL (the timeout avoids hanging on a slow server)
response = requests.get(url, timeout=10)
response.raise_for_status()

# Parse the HTML content using Beautiful Soup
soup = BeautifulSoup(response.content, 'html.parser')

# Extract the title tag (None if the page has no <title>)
title = soup.title.string if soup.title else None

# Print the title
print(title)
```

On the other hand, JavaScript can be used on both the front end and the back end. With the combination of Cheerio and Axios, you can scrape many static pages with just a few lines of code. The learning curve is steeper, though, so beginners may get discouraged when scraping with JavaScript. JavaScript also handles many requests with ease thanks to its asynchronous nature (tasks can be handled concurrently). So, if you want to scrape millions of pages, JavaScript can be the better choice.

Example of extracting the title tag using Axios and Cheerio:

```javascript
const axios = require('axios');
const cheerio = require('cheerio');

const url = 'https://scrapingdog.com/';

// Send a GET request to the URL using Axios
axios.get(url)
  .then(response => {
    // Load the HTML content into Cheerio
    const $ = cheerio.load(response.data);

    // Extract the title tag
    const title = $('title').text();

    // Print the title
    console.log(title);
  })
  .catch(error => {
    console.error(error);
  });
```

Alternative Solution: Readily Available Tools for Web Scraping

You can also choose from various open-source web scraping tools that are free to use. Some of these tools require a certain amount of code modification, while others need no coding at all. Most of them can only scrape the page a user is currently on and cannot be scaled to scrape thousands of pages automatically.

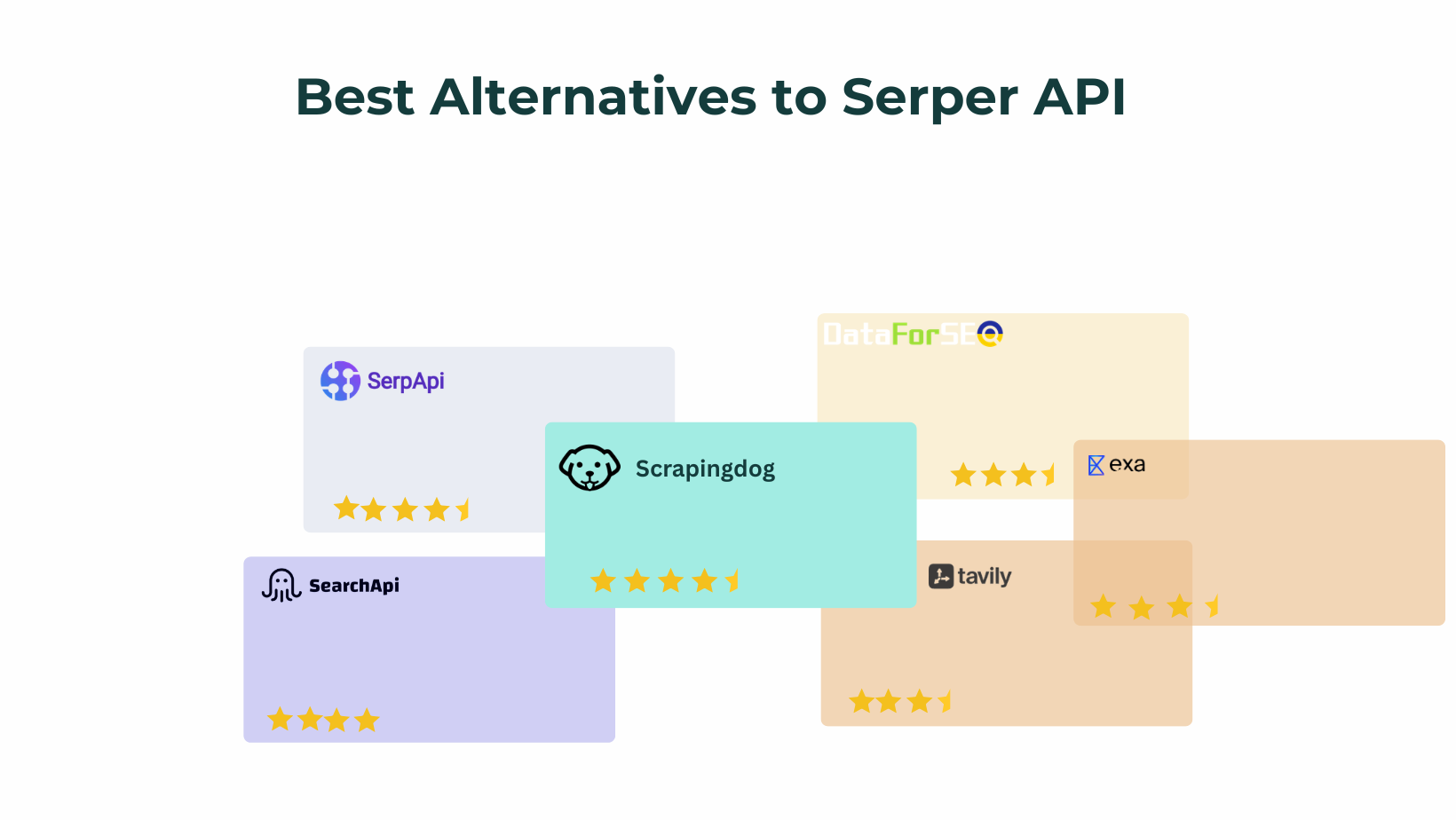

You can also use readily available services like Scrapingdog as external web scrapers. They can provide proxy services for scraping, or scrape the data directly and deliver it in the format you need. This frees up time for other development priorities instead of data pulling. Companies with no developers or data engineers to support data analytics can benefit especially from these tools and the data they deliver.

Here are 5 key takeaways:

- There’s no single “best” language for web scraping; the right choice depends on scale, speed needs, and project goals.

- Python is the most popular option thanks to simple syntax and strong libraries like BeautifulSoup, Scrapy, and Selenium.

- JavaScript (Node.js) works well for dynamic, JavaScript-heavy websites and browser automation.

- Faster, compiled languages can improve performance but increase development complexity.

- Familiarity matters most: using a language you know often leads to better results than chasing trends.

Frequently Asked Questions

Which language is fastest for web scraping?

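Short answer: it depends on what you measure. Compiled languages such as C++ execute fastest, but scraping time is typically dominated by waiting on the network. Python is flexible and easy to learn, so it is usually the fastest language to build a scraper in.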

Is PHP good for web scraping?

Yes. PHP is a back-end scripting language, and you can scrape websites with plain PHP code.

Can Python replace Java?

Not directly. Existing Java code cannot simply be switched over to Python; it has to be rewritten.

Is Python good for scraping?

Yes. Python has a huge collection of libraries for web scraping, so extracting data with Python is convenient and quick to set up.

Is Scrapy better than BeautifulSoup?

It depends on the project. Scrapy is a more complex, full-featured crawling framework and is better suited to large projects, while BeautifulSoup is a parsing library that works well for small projects.