Google processes over 8.5 billion searches every day, making it one of the richest sources of real-time market data on the internet. Whether you're tracking competitor rankings, monitoring SERP features, or building an AI model, scraping Google search results is a fast way to turn that data into actionable insights.

In this guide, we'll build a Google search scraper from scratch using Python, Selenium, and BeautifulSoup, and then show you how to scale it without getting blocked.

Quick Answer: How to Scrape Google Search Results

Step 1: Scrape the HTML content of any Google search URL (https://www.google.com/search?q=your+keyword) using Selenium, which renders JavaScript-driven pages.

Step 2: Parse the HTML response using BeautifulSoup to extract titles, links, and descriptions from the result blocks.

Step 3: For production use, skip the DIY setup and use Scrapingdog's Google SERP API. With just one API call, it returns clean, parsed JSON with organic results, Featured Snippets, PAA boxes, and more.

```python
import requests

api_key = "your-api-key"
url = "https://api.scrapingdog.com/google"

params = {
    "api_key": api_key,
    "query": "web scraping",
    "country": "us",
    "advance_search": "true",
    "domain": "google.com"
}

response = requests.get(url, params=params)

if response.status_code == 200:
    data = response.json()
    print(data)
else:
    print(f"Request failed with status code: {response.status_code}")
```

That's it. Keep reading for the full breakdown with detailed code examples.

Why Even Scrape Google Search Results?

Competitive Intelligence — See which competitors rank for your target keywords and how they position themselves.

SEO & Rank Tracking — Automate rank tracking across hundreds of keywords, locations, and languages at scale.

Market Research — Google SERPs reflect real-time consumer intent, helping you spot trends before your competitors do.

Lead Generation — Extract business listings and websites for any industry query to build targeted lead lists fast.

AI & LLM Training — SERP data links to authoritative, real-world content that makes excellent training data for AI models.

Ad & SERP Monitoring — Track how Google’s SERP features like AI Overviews, Featured Snippets, and Shopping ads impact your organic visibility.

Why use Scrapingdog for scraping Google Search Results?

No Getting Blocked — Scrapingdog handles proxy rotation, header randomization, and browser fingerprinting so your requests never get flagged by Google.

Pre-Parsed JSON Response — No need to write fragile CSS selectors or deal with HTML parsing. Get clean, structured JSON with titles, links, descriptions, and more out of the box.

Location-Specific Results — Set a country or language parameter to extract geo-targeted SERPs, perfect for local SEO monitoring and international market research.

Handles SERP Features — Extracts not just organic results but also Featured Snippets, People Also Ask, AI Overviews, Shopping results, and more.

Scales Without Limits — Whether you’re making 100 or 100 million requests, the infrastructure scales with you with no rate-limiting headaches on your end.

Simple Integration — A single API call is all it takes. Works with any language, like Python, Node.js, PHP, or a plain cURL request.

Reliable Uptime — Built for production workloads with 99.9% uptime, so your data pipelines never go dark.

Requirements

This guide assumes Python is already installed on your computer. If not, you can download it from here. Next, create a folder to keep your Python scripts in.

```bash
mkdir google
```

We will need to install three libraries.

- selenium: a browser automation tool. It will be used with ChromeDriver to automate the Google Chrome browser. You can download ChromeDriver from here. (Selenium 4.6 and later can also fetch a matching driver automatically through Selenium Manager.)
- BeautifulSoup: a parsing library. It will be used to parse the important data out of the raw HTML.
- pandas: this library will help us store the data inside a CSV file.

```bash
pip install beautifulsoup4 selenium pandas
```

Now, create a Python file for the script. I am naming the file search.py.

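Why Selenium

Google's results pages rely on JavaScript, so a plain HTTP request often returns an incomplete page (or a prompt to enable JavaScript) instead of the results. Selenium drives a real Chrome browser that executes the JavaScript before we read the HTML.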

Scraping Google with Python and Selenium

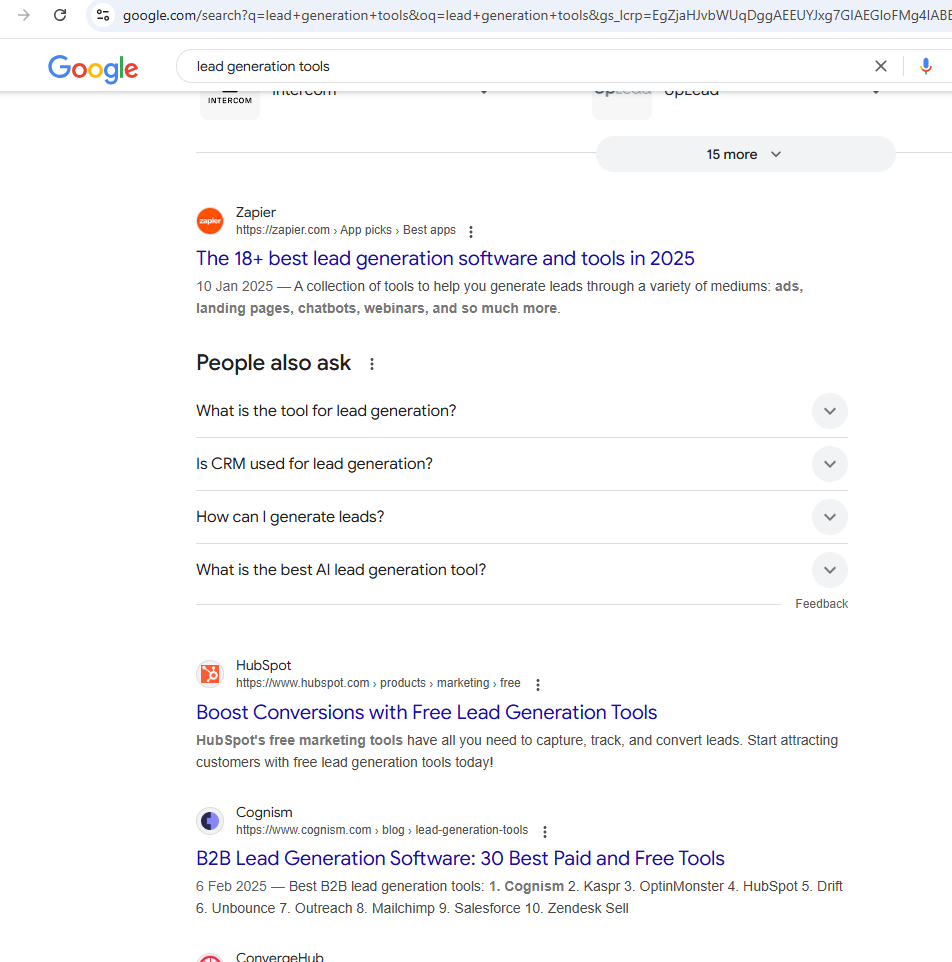

In this article, we are going to scrape this page. Of course, you can pick any Google query. Before writing the code, let's first see what the page looks like and what data we will parse from it.

The page may look different in other countries.

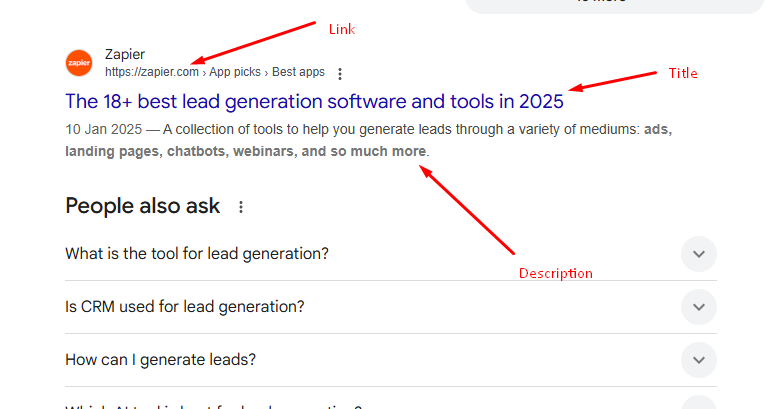

We are going to extract the link, title, and description from the target Google page. Let’s first create a basic Python script that will open the target Google URL and extract the raw HTML from it.

```python
from selenium import webdriver
from selenium.webdriver.chrome.service import Service
from selenium.webdriver.common.by import By
import time
from bs4 import BeautifulSoup

# Set path to ChromeDriver (Replace this with the correct path)
CHROMEDRIVER_PATH = "D:/chromedriver.exe"  # Change this to match your file location

# Initialize WebDriver with Service
service = Service(CHROMEDRIVER_PATH)
options = webdriver.ChromeOptions()
options.add_argument("--window-size=1920,1080")  # Set window size
driver = webdriver.Chrome(service=service, options=options)

# Open Google Search URL
search_url = "https://www.google.com/search?q=lead+generation+tools&oq=lead+generation+tools"
driver.get(search_url)

# Wait for the page to load
time.sleep(2)

page_html = driver.page_source
print(page_html)
```

Let me briefly explain the code:

- First, we import all the required libraries. Here, `selenium.webdriver` controls the web browser and `time` provides the `sleep()` function.
- Then we define the location of our ChromeDriver.
- Next, we create a ChromeDriver instance and declare a few options.
- Using the `.get()` function, we open the target link.
- Using the `.sleep()` function, we pause for two seconds to give the page time to load. A fixed pause does not guarantee the page has finished loading, so increase it if you get incomplete HTML.
- Finally, we extract the HTML from the page with `driver.page_source` and print it.
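The script above hard-codes `search_url`. If you want to loop over several keywords, a small helper can build the URL for you and escape spaces and special characters correctly. This is a minimal sketch: `build_search_url` is a hypothetical helper name, and `hl` (interface language) and `gl` (country) are commonly used Google search URL parameters whose handling Google may change at any time.

```python
from urllib.parse import urlencode


def build_search_url(query, hl=None, gl=None):
    """Build a Google search URL for `query` (hypothetical helper).

    urlencode() escapes special characters and turns spaces into '+',
    so "lead generation tools" becomes "lead+generation+tools".
    """
    params = {"q": query}
    # Optional parameters are only added when a value is given.
    if hl:
        params["hl"] = hl  # interface language, e.g. "en"
    if gl:
        params["gl"] = gl  # country, e.g. "us"
    return "https://www.google.com/search?" + urlencode(params)


if __name__ == "__main__":
    print(build_search_url("lead generation tools"))
```

You can pass the returned string to `driver.get()` in place of the hard-coded URL.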

Let’s run this code.

Yes, yes, I know you got a CAPTCHA. This is where the options arguments matter. While scraping Google, you should add `--disable-blink-features=AutomationControlled`. This Chrome option hides the fact that the browser is being controlled by Selenium (in particular, it stops Chrome from reporting `navigator.webdriver` as true), making it less detectable by anti-bot mechanisms. It does not hide every browser fingerprint, though, so you may still see a CAPTCHA from time to time.

```python
from selenium import webdriver
from selenium.webdriver.chrome.service import Service
from selenium.webdriver.common.by import By
import time
from bs4 import BeautifulSoup

# Set path to ChromeDriver (Replace this with the correct path)
CHROMEDRIVER_PATH = "D:/chromedriver.exe"  # Change this to match your file location

# Initialize WebDriver with Service
service = Service(CHROMEDRIVER_PATH)
options = webdriver.ChromeOptions()
options.add_argument("--window-size=1920,1080")  # Set window size
# Hide the automation flag (navigator.webdriver) that Selenium normally exposes
options.add_argument("--disable-blink-features=AutomationControlled")
driver = webdriver.Chrome(service=service, options=options)

# Open Google Search URL
search_url = "https://www.google.com/search?q=lead+generation+tools&oq=lead+generation+tools"
driver.get(search_url)

# Wait for the page to load
time.sleep(2)

page_html = driver.page_source
print(page_html)
```

As expected, with that argument in place we were able to scrape Google. Now, let's parse it.
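Even with this option, Google can still serve a CAPTCHA, especially after many requests, so it helps to check whether you actually got a results page before parsing. The sketch below is a heuristic, not an official signal: it assumes Google's block page is served under a `/sorry/` path and mentions "unusual traffic", which is how it commonly appears but may change. `looks_blocked` is a hypothetical helper name.

```python
def looks_blocked(current_url, page_html):
    """Heuristically detect Google's CAPTCHA / 'unusual traffic' page.

    Assumption: the block page lives under google.com/sorry/ and contains
    the phrase "unusual traffic". Both are observed behaviour, not a
    documented API, so treat a False result as "probably fine", not proof.
    """
    if "/sorry/" in current_url:
        return True
    return "unusual traffic" in page_html.lower()


if __name__ == "__main__":
    print(looks_blocked("https://www.google.com/search?q=test", "<h3>A result</h3>"))
```

In the script, you could call it after the sleep as `looks_blocked(driver.current_url, driver.page_source)` and stop or wait before retrying when it returns True.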

Parsing HTML with BeautifulSoup

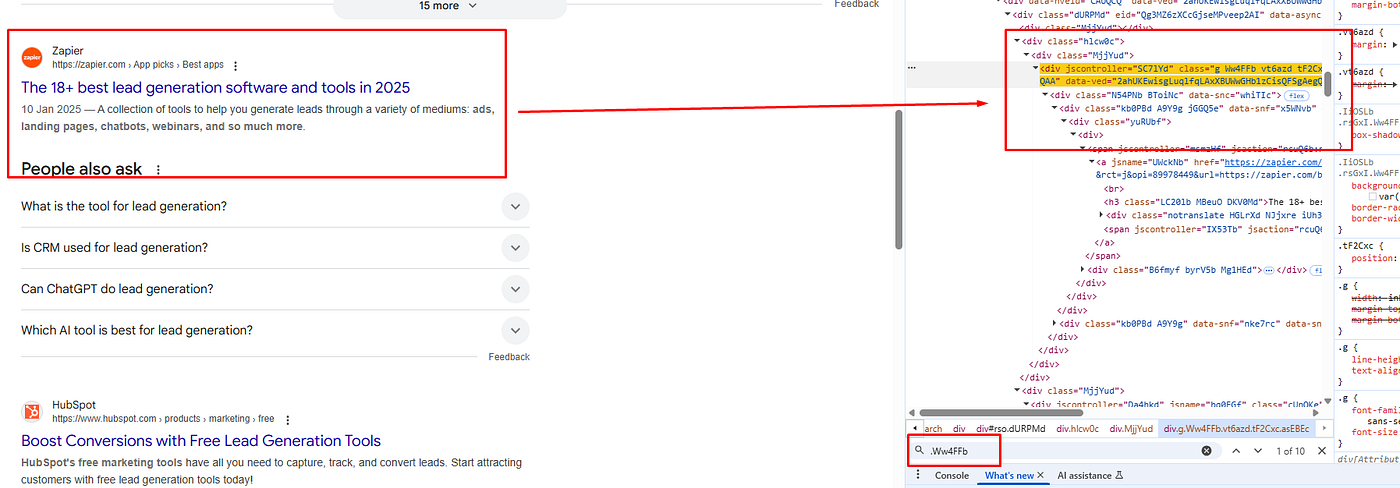

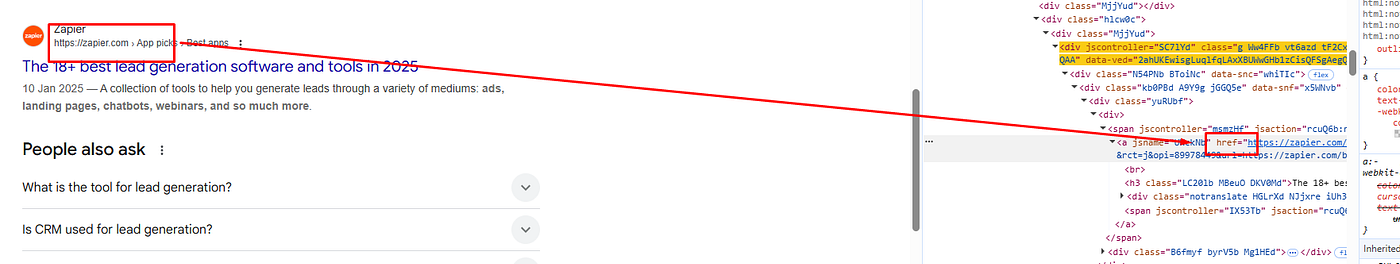

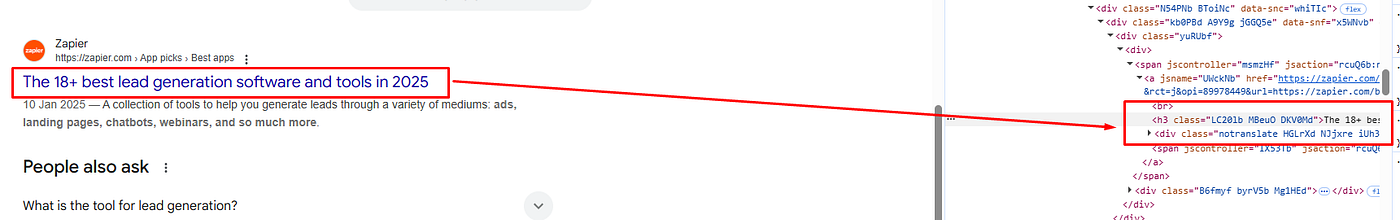

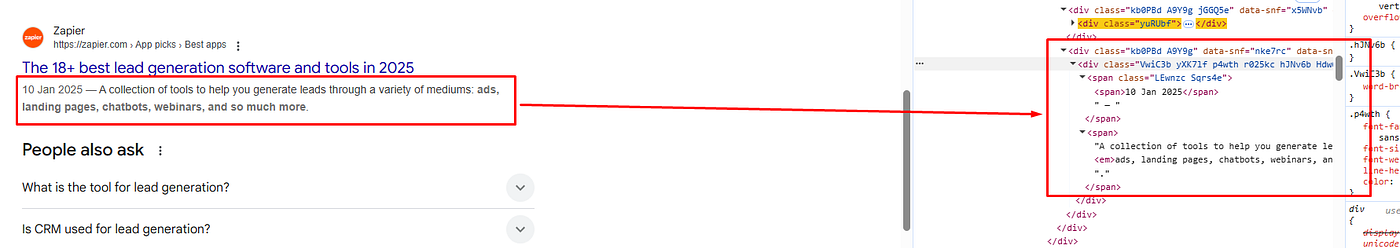

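Before parsing the data, we have to find the DOM location of each element.

All the organic results share the class Ww4FFb, and they all sit inside a div tag with the class dURPMd. These class names are generated by Google and change from time to time, so confirm them in your browser's developer tools before running the code.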

The link is located inside the a tag.

The title is located inside the h3 tag.

The description is located inside the div tag with the class VwiC3b. Let’s code it now.

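```python
page_html = driver.page_source

obj = {}
l = []

soup = BeautifulSoup(page_html, 'html.parser')

# Every organic result is a div.Ww4FFb inside the div.dURPMd container
allData = soup.find("div", {"class": "dURPMd"}).find_all("div", {"class": "Ww4FFb"})
print(len(allData))

for result in allData:
    # find() returns None when an element is missing, and None has no
    # .text or .get(), so each lookup falls back to None on AttributeError
    try:
        obj["title"] = result.find("h3").text
    except AttributeError:
        obj["title"] = None

    try:
        obj["link"] = result.find("a").get('href')
    except AttributeError:
        obj["link"] = None

    try:
        obj["description"] = result.find("div", {"class": "VwiC3b"}).text
    except AttributeError:
        obj["description"] = None

    l.append(obj)
    obj = {}

print(l)
```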

In the allData variable, we store all the organic results present on the page. Then, using the for loop, we iterate over the results, store each one's title, link, and description in the dictionary obj, and append it to the list l. Lastly, we print the list.

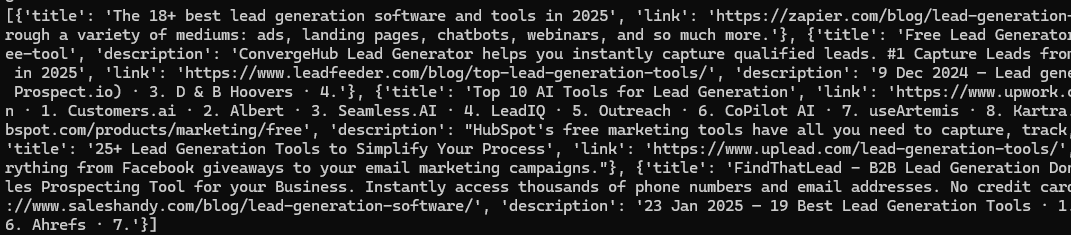

Once you run the code, you will get a clean, JSON-like list of results such as this.

Finally, we were able to scrape Google and parse the data.

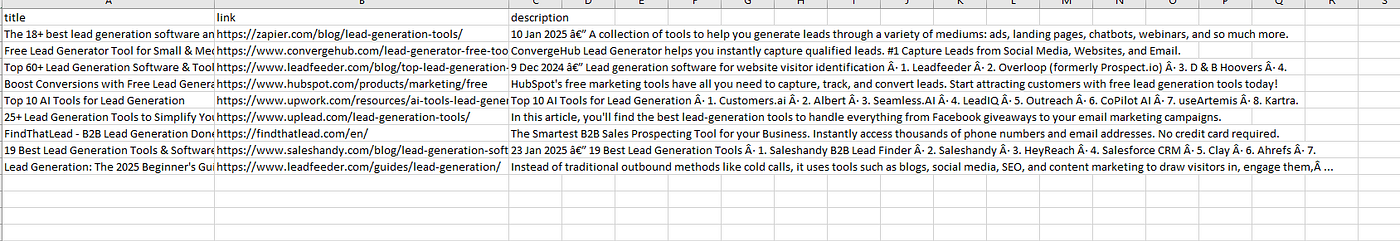
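Because Google's class names change often, it helps to test your selectors against a saved copy of the page instead of hitting Google every time. The sketch below applies the same selectors as the BeautifulSoup code above (a `div` with class `Ww4FFb`, its first `h3`, its first `a` link, and the `div` with class `VwiC3b`), but it swaps in Python's built-in `html.parser` module so it runs without extra installs. It is a simplified illustration, not a replacement for the BeautifulSoup version; `OrganicResultParser`, `parse_organic_results`, and the sample HTML are hypothetical.

```python
from html.parser import HTMLParser

RESULT_CLASS = "Ww4FFb"  # class shared by organic result blocks (changes over time)
DESC_CLASS = "VwiC3b"    # class of the description div (changes over time)


class OrganicResultParser(HTMLParser):
    """Collect title, link and description from Google result blocks.

    A result is a <div> whose class list contains RESULT_CLASS; inside it
    we keep the first <h3> text, the first <a href>, and the text of the
    first <div> whose class list contains DESC_CLASS.
    """

    def __init__(self):
        super().__init__()
        self.results = []
        self._current = None  # dict for the result block being read
        self._div_depth = 0   # open <div> tags inside the current block
        self._field = None    # "title" or "description" while capturing text
        self._desc_depth = 0  # open <div> tags inside the description

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        classes = (attrs.get("class") or "").split()
        if self._current is None:
            # Outside a result: only look for the start of the next block.
            if tag == "div" and RESULT_CLASS in classes:
                self._current = {"title": None, "link": None, "description": None}
                self._div_depth = 1
            return
        if tag == "div":
            self._div_depth += 1
            if self._field == "description":
                self._desc_depth += 1
            elif DESC_CLASS in classes and self._current["description"] is None:
                self._field = "description"
                self._desc_depth = 1
                self._current["description"] = ""
        elif tag == "h3" and self._current["title"] is None:
            self._field = "title"
            self._current["title"] = ""
        elif tag == "a" and self._current["link"] is None:
            self._current["link"] = attrs.get("href")

    def handle_endtag(self, tag):
        if self._current is None:
            return
        if tag == "h3" and self._field == "title":
            self._field = None
        elif tag == "div":
            if self._field == "description":
                self._desc_depth -= 1
                if self._desc_depth == 0:
                    self._field = None
            self._div_depth -= 1
            if self._div_depth == 0:
                # The result block is closed: store it and reset.
                self.results.append(self._current)
                self._current = None
                self._field = None

    def handle_data(self, data):
        # Text inside <h3> or the description div (including nested tags).
        if self._current is not None and self._field:
            self._current[self._field] += data


def parse_organic_results(page_html):
    parser = OrganicResultParser()
    parser.feed(page_html)
    parser.close()
    return parser.results


if __name__ == "__main__":
    sample = (
        '<div class="dURPMd">'
        '<div class="Ww4FFb"><a href="https://example.com/a"><h3>Tool A</h3></a>'
        '<div class="VwiC3b">Find <b>leads</b> fast</div></div>'
        '</div>'
    )
    print(parse_organic_results(sample))
```

To test against a real page, save `driver.page_source` to a file once and feed its contents to `parse_organic_results()` while you adjust the class names.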

Storing data to a CSV file

We are going to use the pandas library to save the search results to a CSV file.

The first step would be to import this library at the top of the script.

```python
import pandas as pd
```

Now we will create a pandas DataFrame from the list l and save it to a CSV file.

```python
df = pd.DataFrame(l)
df.to_csv('google.csv', index=False, encoding='utf-8')
```

Once you run the code again, you will find a google.csv file in your working directory.
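If you would rather not install pandas just to write a CSV file, Python's built-in `csv` module can do the same job. This sketch assumes the results are the list of dictionaries `l` built above, each with `title`, `link`, and `description` keys; `save_results_csv` is a hypothetical helper name.

```python
import csv
import io

FIELDS = ["title", "link", "description"]


def save_results_csv(rows, fileobj):
    """Write result dictionaries to an open text file as CSV.

    Missing keys and None values become empty cells.
    """
    writer = csv.DictWriter(fileobj, fieldnames=FIELDS)
    writer.writeheader()
    for row in rows:
        writer.writerow({key: row.get(key) for key in FIELDS})


if __name__ == "__main__":
    # Demo with an in-memory buffer; for a real file use
    # open("google.csv", "w", newline="", encoding="utf-8") instead.
    buffer = io.StringIO()
    save_results_csv([{"title": "Tool A", "link": "https://example.com/a", "description": None}], buffer)
    print(buffer.getvalue())
```

The `csv` documentation recommends opening files with `newline=""`, which prevents blank lines between rows on Windows.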

Complete Code

You can scrape much more from this target page, but for now, the complete code looks like this:

```python
from selenium import webdriver
from selenium.webdriver.chrome.service import Service
from selenium.webdriver.common.by import By
import time
from bs4 import BeautifulSoup
import pandas as pd

# Set path to ChromeDriver (Replace this with the correct path)
CHROMEDRIVER_PATH = "D:/chromedriver.exe"  # Change this to match your file location

# Initialize WebDriver with Service
service = Service(CHROMEDRIVER_PATH)
options = webdriver.ChromeOptions()
options.add_argument("--window-size=1920,1080")  # Set window size
# Hide the automation flag (navigator.webdriver) that Selenium normally exposes
options.add_argument("--disable-blink-features=AutomationControlled")
driver = webdriver.Chrome(service=service, options=options)

# Open Google Search URL
search_url = "https://www.google.com/search?q=lead+generation+tools&oq=lead+generation+tools"
driver.get(search_url)

# Wait for the page to load
time.sleep(2)

page_html = driver.page_source
driver.quit()  # Close the browser once we have the HTML

soup = BeautifulSoup(page_html, 'html.parser')

obj = {}
l = []

# Every organic result is a div.Ww4FFb inside the div.dURPMd container
allData = soup.find("div", {"class": "dURPMd"}).find_all("div", {"class": "Ww4FFb"})
print(len(allData))

for result in allData:
    # find() returns None for missing elements; fall back to None
    try:
        obj["title"] = result.find("h3").text
    except AttributeError:
        obj["title"] = None

    try:
        obj["link"] = result.find("a").get('href')
    except AttributeError:
        obj["link"] = None

    try:
        obj["description"] = result.find("div", {"class": "VwiC3b"}).text
    except AttributeError:
        obj["description"] = None

    l.append(obj)
    obj = {}

# Save the results to a CSV file
df = pd.DataFrame(l)
df.to_csv('google.csv', index=False, encoding='utf-8')

print(l)
```

However, this approach does not scale: after a certain number of requests, Google will start blocking them. We need more advanced scraping tools to overcome this problem.

Limitations of scraping Google search results with Python

The approach above works well if you are not looking to scrape millions of pages. If you want to scrape Google Search at scale, however, it will fall flat and your data pipeline will soon stop working. Here are a few reasons why your scraper will get blocked:

- Since we use the same IP for every request, Google will eventually ban that IP, which stops the data pipeline.

- Along with rotating IPs, scraping at scale needs realistic headers and multiple browser instances, which our approach lacks.
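To give a sense of what rotating IPs and headers involves, here is a minimal round-robin sketch. The proxy addresses and User-Agent strings are placeholders, `rotating_request_settings` is a hypothetical helper, and the `{"http": ..., "https": ...}` shape is the one the `requests` library accepts in its `proxies` argument. (With Selenium, you would pass a proxy to Chrome through the `--proxy-server` argument instead.) A real setup also needs a large pool of healthy proxies and logic to drop the ones that get banned.

```python
from itertools import cycle


def rotating_request_settings(proxies, user_agents):
    """Yield (proxies_dict, headers_dict) pairs forever, round-robin.

    Each call to next() moves to the next proxy and the next User-Agent,
    so consecutive requests use a different proxy and header whenever
    each list has more than one entry.
    """
    for proxy, user_agent in zip(cycle(proxies), cycle(user_agents)):
        yield {"http": proxy, "https": proxy}, {"User-Agent": user_agent}


if __name__ == "__main__":
    # Placeholder values: replace with your own proxy pool and browser strings.
    settings = rotating_request_settings(
        ["http://proxy1.example:8080", "http://proxy2.example:8080"],
        ["placeholder-user-agent-1", "placeholder-user-agent-2", "placeholder-user-agent-3"],
    )
    for _ in range(3):
        proxies, headers = next(settings)
        print(proxies["https"], headers["User-Agent"])
        # e.g. requests.get(url, proxies=proxies, headers=headers, timeout=10)
```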

The solution to this problem is to use a Google Search API like Scrapingdog. With Scrapingdog, you don't have to worry about proxy rotation or retries: it handles the hassle of proxy and header rotation and delivers the data to you seamlessly.

With Scrapingdog, you can scrape millions of pages without getting blocked. Let's see how to use it to scrape Google at scale.

Scraping Google Search Results with Scrapingdog

Now that we know how to scrape Google search results using Python and BeautifulSoup, let's look at a solution that can help us scrape millions of Google pages without getting blocked.

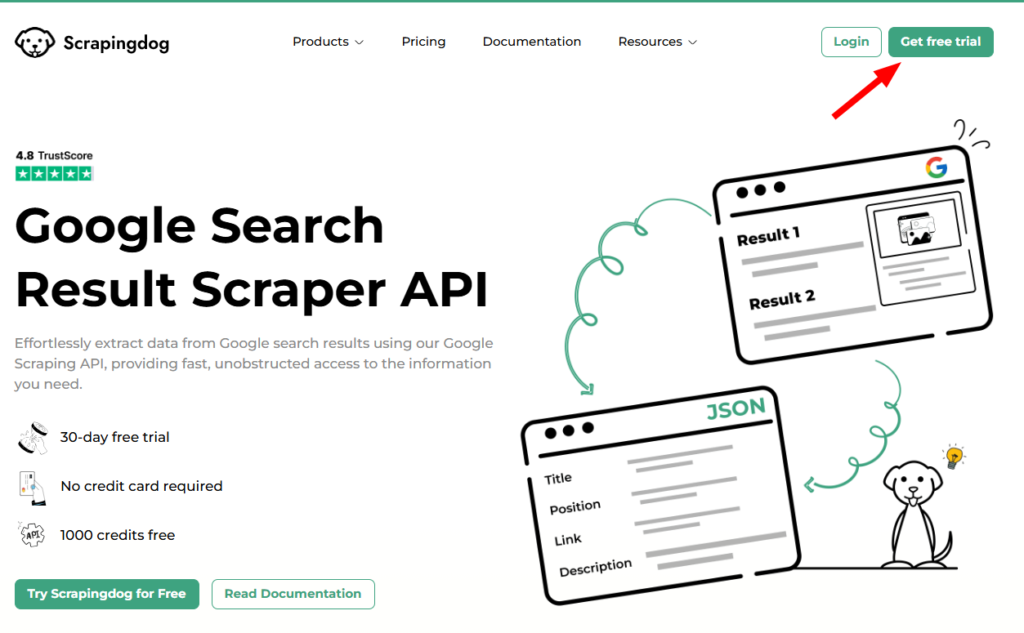

We will use Scrapingdog’s Google Search Result Scraper API for this task. This API handles everything from proxy rotation to headers.

You just send a GET request, and in return you get parsed JSON data.

This API offers a free trial, and you can register for it from here. After registering for a free account, read the docs to get a complete picture of the API.

```python
import requests

api_key = "Paste-your-own-API-key"
url = "https://api.scrapingdog.com/google/"

params = {
    "api_key": api_key,
    "query": "lead generation tools",
    "results": 10,
    "country": "us",
    "page": 0
}

response = requests.get(url, params=params)

if response.status_code == 200:
    data = response.json()
    print(data)
else:
    print(f"Request failed with status code: {response.status_code}")
```

The code is pretty simple. We are sending a GET request to https://api.scrapingdog.com/google/ along with some parameters. For more information on these parameters, you can again refer to the documentation.

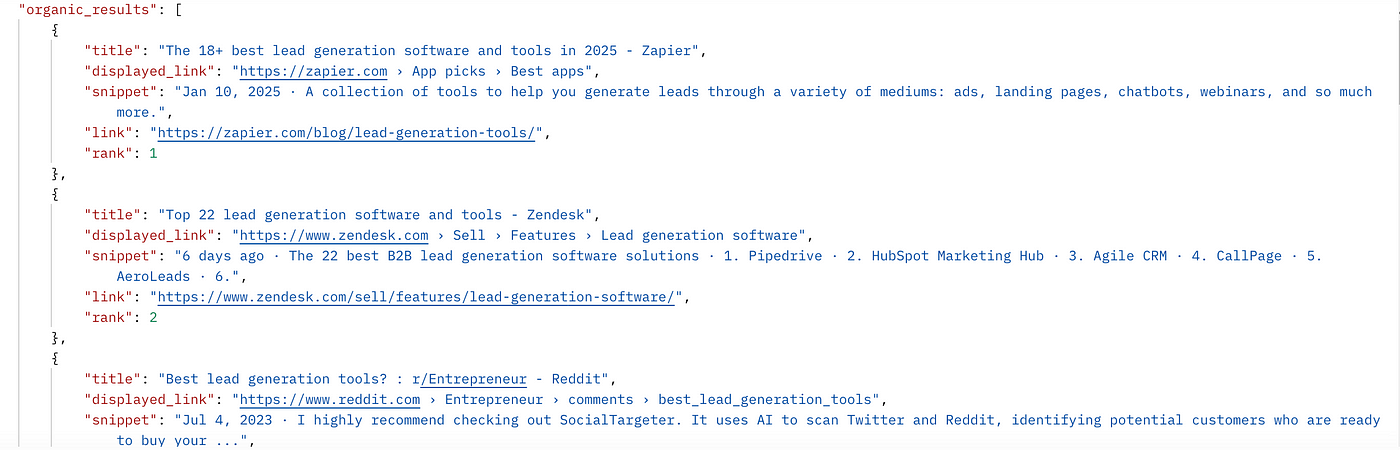

Once you run this code, you will get a JSON response.

In this JSON response, you also get People Also Ask data and related searches, so you are getting much more from Google than just the organic results.
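The example above makes a single call. In a pipeline that makes many calls, an occasional one will fail with a network timeout or a non-200 status, and a small wrapper with exponential backoff keeps the pipeline from stopping at the first hiccup. This is a generic sketch, not part of Scrapingdog's tooling: `get_with_retries` is a hypothetical helper, and it takes the fetch function as an argument so the retry logic can be tested without network access.

```python
import time


def get_with_retries(fetch, max_attempts=3, base_delay=1.0, sleep=time.sleep):
    """Call fetch() until it returns a response whose status_code is 200.

    Between attempts it waits base_delay, 2 * base_delay, 4 * base_delay, ...
    (exponential backoff). Network errors raised as OSError (the requests
    library's exceptions inherit from it) are retried and re-raised after
    the last attempt. If every attempt returns a non-200 response, the
    last one is returned so the caller can inspect it.
    """
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    response = None
    for attempt in range(max_attempts):
        try:
            response = fetch()
            if response.status_code == 200:
                return response
        except OSError:
            if attempt == max_attempts - 1:
                raise
        if attempt < max_attempts - 1:
            sleep(base_delay * 2 ** attempt)
    return response


# Usage with the request above (url and params as defined there):
# response = get_with_retries(lambda: requests.get(url, params=params, timeout=60))
```

Passing `sleep` as a parameter is only there for testing; in normal use you leave it at its default.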

What if I need search results from a different country?

As you might know, Google shows different results in different countries for the same query. To get results for another country, you just change the country parameter in the code above.

Let's say you need results from the United Kingdom. For this, change the value of the country parameter to gb (the ISO country code for the UK).

You can even extract 100 search results instead of 10 by just changing the value of the results parameter.
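Putting these two tips together, the sketch below builds one parameter set per country so you can compare SERPs across markets in a loop. It reuses the parameter names from the request above (`api_key`, `query`, `results`, `country`, `page`); `build_params` is a hypothetical helper, and the supported values for each parameter are listed in the documentation.

```python
def build_params(api_key, query, country="us", results=10, page=0):
    """Return query parameters for one request to Scrapingdog's Google endpoint.

    Parameter names mirror the example above; check the documentation
    for the values each one accepts.
    """
    return {
        "api_key": api_key,
        "query": query,
        "results": results,
        "country": country,
        "page": page,
    }


if __name__ == "__main__":
    # One parameter set per market; "gb" is the ISO code for the United Kingdom.
    for country in ["us", "gb"]:
        params = build_params("Paste-your-own-API-key", "lead generation tools",
                              country=country, results=100)
        print(params["country"], params["results"])
        # Send it exactly like before, e.g.:
        # requests.get("https://api.scrapingdog.com/google/", params=params)
```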

Here’s a video tutorial on how to use Scrapingdog’s Google SERP API.⬇️

Note: Scrapingdog has recently launched an all-in-one search engine API.

This API gives filtered data from all major search engines (Google + Bing + Yahoo + DuckDuckGo).

We are calling it the Universal SERP API. Its advantages are that you get data from all of these engines in one API call, you don't need to filter out duplicate results, and it is economical.

How To Scrape Google Ads using Scrapingdog's Search API

You can use the same API to extract competitors' ad results as well!

In the documentation, you can read about the 'advance_search' parameter. This parameter returns advanced SERP results, which include Google Ads results.

I have made a quick tutorial on this, too, showing how Scrapingdog's Google SERP API can be used to get ads data.⬇️

Is there an official Google SERP API to extract search results?

Google offers its own API, the Custom Search JSON API, to extract data from its search engine. It is available at this link for anyone who wants to use it. However, this API sees limited use for the following reasons:

The API is costly: every 1,000 requests cost you around $5, which adds up quickly at scale.

The API has limited functionality: it is designed to search only a specific group of websites that you configure. You can change the setup to search the whole web, but that costs you extra time.

Limited information: the API returns only part of what appears on a live results page, so the extracted data may not be useful for every use case.
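To put the pricing point in numbers, the sketch below estimates a daily bill at $5 per 1,000 queries. The 100 free queries per day reflects Google's published allowance for the Custom Search JSON API at the time of writing; treat it, and the price, as assumptions to check against the current pricing page. `daily_cost` is a hypothetical helper.

```python
def daily_cost(queries_per_day, price_per_1000=5.0, free_per_day=100):
    """Estimate the daily cost in USD of a pay-per-query search API.

    Queries up to free_per_day are free; every query above that is billed
    at price_per_1000 / 1000 dollars.
    """
    billable = max(0, queries_per_day - free_per_day)
    return billable / 1000 * price_per_1000


if __name__ == "__main__":
    for volume in (100, 1_000, 10_000):
        print(f"{volume:>6} queries/day -> ${daily_cost(volume):.2f}")
```

At 10,000 queries a day, that works out to $49.50 per day, or roughly $1,485 over a 30-day month.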

Scrape Google Search Data Easily with Our Google Sheets Add-On (For Non-Developers)

If you are not a developer and want to scrape data from Google, here is some good news for you.

We have recently launched a Google Sheets add-on, Google Search Scraper.

Here is the video

Here are five key takeaways:

- Google Search results can be scraped to extract titles, URLs, descriptions, rankings, and other SERP insights useful for SEO, research, and market analysis.

- A basic Python setup using tools like Selenium and BeautifulSoup works for learning and small-scale scraping.

- Google actively detects scraping through IP behavior, headers, and browser fingerprints, making DIY scraping unreliable at scale.

- Scaling manual scrapers requires handling proxies, retries, CAPTCHA, and rendering. This adds significant engineering overhead.

- Using a SERP API like Scrapingdog simplifies the process by returning structured search data without worrying about blocks or infrastructure.

Conclusion

In this article, we saw how to scrape Google results with Python and BeautifulSoup (BS4). Then we used Scrapingdog's Google SERP API to scrape Google search results at scale without getting blocked.

Google has a sophisticated anti-scraping wall that can prevent mass scraping, but Scrapingdog can help you by providing a seamless data pipeline that never gets blocked. Scrapingdog also provides a Bing Search API and a Baidu Search API to scrape results from those search engines.

If you like this article, please do share it on your social media accounts. If you have any questions, please contact me at [email protected].

Additional Resources

- How to Scrape Google AI Mode using Python

- Scrape Bing Search Results using Python (A Detailed Tutorial)

- How to Scrape Baidu Search Results using Python

- Web Scraping Google Jobs using Python

- Web Scraping Google News using Python

- How To Web-Scrape Google Autocomplete Suggestions

- 10 Best Google SERP Scraper APIs

- 6 Best Rank Tracking APIs

- Web Scraping Google Maps using Python

- Web Scraping Google Images using Python

- Web Scraping Google Scholar using Python

- Web Scraping Google Shopping using Python

- How to Scrape People Also Ask using Google Search API

- How to Scrape Google Local Results using Python & Scrapingdog API

- How to scrape Google AI Overviews using Python